Executives across industries are under pressure to reach insights and make decisions quickly. That pressure is raising the importance of streaming data and analytics, which help teams make better-informed decisions that can lead to faster, better outcomes.

While traditional systems store and process data in batches, streaming data is data that is continuously generated from a variety of sources. Data streaming is the process of continuously capturing and processing that data, and its latency can vary widely depending on business needs. Contrary to the traditional perception that “streaming must be in milliseconds,” streaming covers a spectrum of latency: continuous streams of data may be handled in seconds, minutes or even hours.
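To make the latency spectrum concrete, here is a minimal Python sketch in which one windowing function serves both ends of the range, and only the window size changes. The `Event` type, the `window_events` function and the sample data are hypothetical names invented for illustration. They are not part of Snowflake or any other product.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List


@dataclass
class Event:
    """Hypothetical event: a timestamp in seconds plus a measured value."""
    timestamp: float
    value: float


def window_events(events: Iterable[Event], window_seconds: float) -> Iterator[List[Event]]:
    """Group a time-ordered stream of events into consecutive fixed-size windows.

    A small window_seconds approximates low-latency streaming; a large one
    behaves like a periodic batch. Empty windows are skipped.
    """
    batch: List[Event] = []
    window_end = None
    for event in events:
        if window_end is None:
            # The first event anchors the start of the first window.
            window_end = event.timestamp + window_seconds
        while event.timestamp >= window_end:
            # The event belongs to a later window: emit what we have, advance.
            if batch:
                yield batch
                batch = []
            window_end += window_seconds
        batch.append(event)
    if batch:
        yield batch


# Ten illustrative events, one per second.
sample = [Event(timestamp=float(t), value=t * 1.5) for t in range(10)]

per_second = list(window_events(sample, window_seconds=1))   # low latency
per_hour = list(window_events(sample, window_seconds=3600))  # batch-like
```

With these ten events, the one-second window yields a result per event, while the one-hour window yields a single group. The pipeline logic stays the same; the window size is the setting that determines latency.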

However, data streaming presents challenges. For data to have business value, it needs to be ingested from diverse sources at low latency. But low-latency ingestion is often associated with high complexity and cost, forcing organizations to strike a balance between latency and cost.

Leaders across industries are addressing these challenges and preparing for future business requirements with Snowflake’s simplified solution, which combines streaming and batch pipelines in a single layer of architecture. By leveraging Snowflake and collaborating with partners such as AWS to centralize their data in a single platform, organizations are achieving more cost-effective streaming.

For our new ebook, The Modern Data Streaming Pipeline, Snowflake engaged with dozens of customers across seven diverse sectors (financial services, manufacturing, healthcare, cybersecurity, retail, advertising and telecommunications) to explore their most common streaming use cases and examine their architecture choices for optimizing performance and efficiency.

Let’s use manufacturing as an example. Data streaming helps manufacturing companies ingest critical data from across the value chain, such as sensor readings from production equipment, inventory levels, supplier performance metrics and customer demand patterns. This continuous flow of data opens opportunities for manufacturers, enabling them to generate new revenue streams with user behavior data, reduce operating costs by detecting potential issues and improve product quality through equipment performance data.

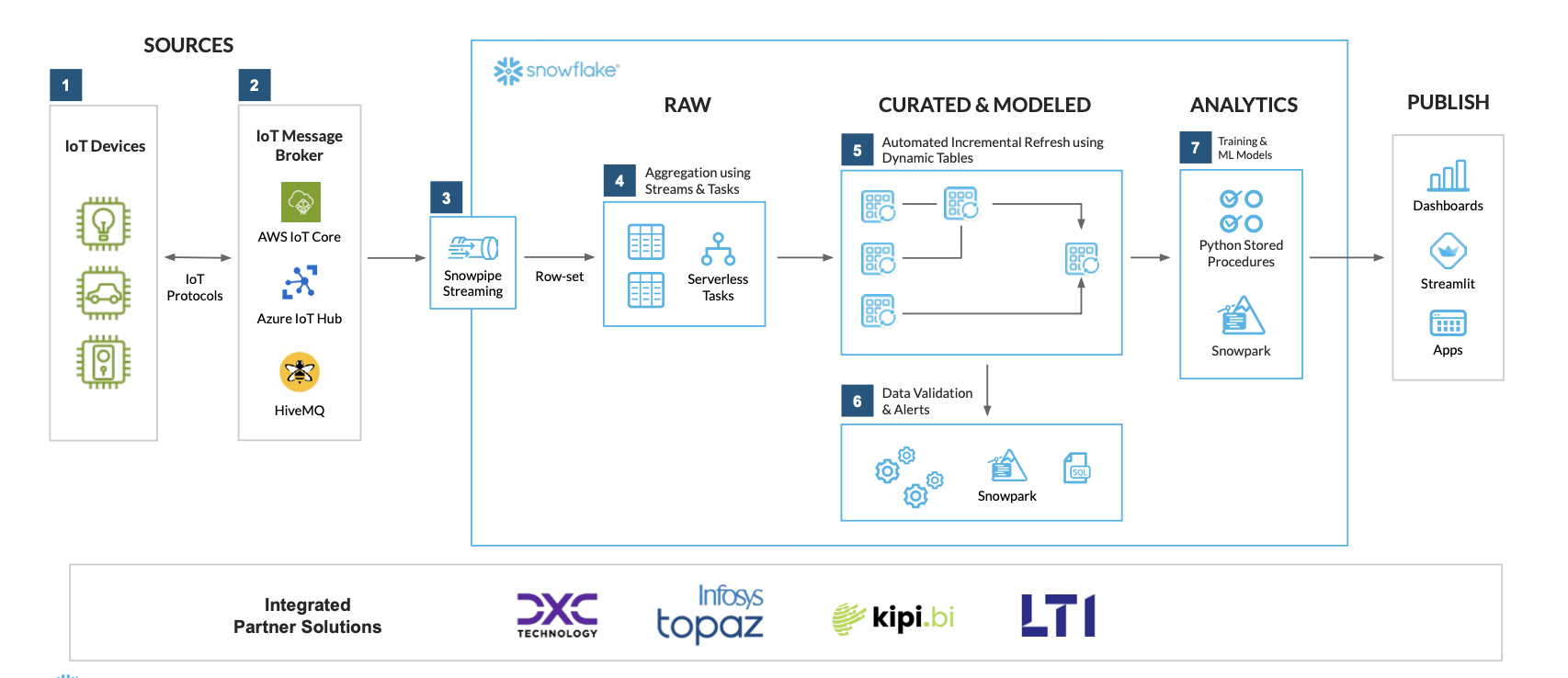

Here’s a manufacturing reference architecture for IoT analytics to illustrate what this can look like in Snowflake:

Manufacturing partners such as DXC, Infosys, Kipi and LTI offer IoT solutions integrated with Snowflake’s streaming capabilities.

And that’s just the beginning. Other customers across this and the other six industries shared how they’re using Snowflake for their top streaming analytics use cases — from advanced personalization in retail, to medical IoT device ingestion in healthcare, to regulatory reporting in financial services. Their recommended streaming reference architectures can help optimize performance and efficiency for these high-demand use cases.

To discover how organizations in your industry are using Snowflake for streaming analytics — and realizing significant gains in the process — download our ebook: The Modern Data Streaming Pipeline: Top Streaming Architectures and Use Cases Across 7 Industries.

The post The Modern Data Streaming Pipeline: Streaming Reference Architectures and Use Cases Across 7 Industries appeared first on Snowflake.
